
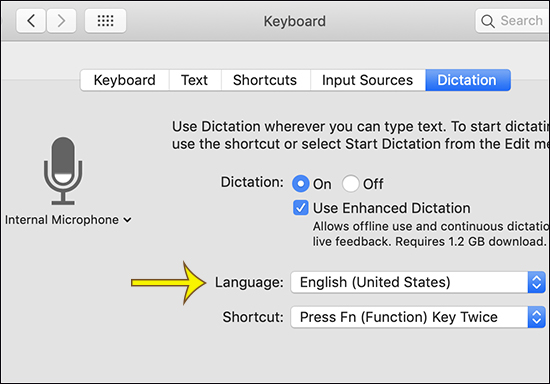

In this video, I show the recently introduced Dictate talk-to-type feature added to the Office 365 version of Microsoft Word. Please note the feature requires an.

The first thing you’re going to want to do is open a blank Word document on your Mac. After that, simply hit the fn button twice on your Mac’s keyboard. From there it should show a Siri-like microphone, or the one that you see on the iMessage keyboard on iOS and iPadOS. The pop-up menu below the microphone icon in the Dictation pane of Keyboard preferences shows which device your Mac is currently using to listen.

In a Unity app, dictation and phrase recognition can't be handled at the same time. If a GrammarRecognizer or KeywordRecognizer is active, a DictationRecognizer can't be active, and vice versa.

Enabling the capability for Voice

The Microphone capability must be declared for an app to use Voice input. In the Unity Editor, navigate to Edit > Project Settings > Player. You also need to grant permissions to the app for microphone access on your HoloLens device. You'll be asked to do this on device startup, but if you accidentally clicked "no", you can change the permissions in the device settings.

On the Mac, click the pop-up menu below the microphone icon, then choose the microphone. Dictation - Speech to text allows you to dictate, record, translate and transcribe text instead of typing. It uses the latest speech-to-text voice recognition technology, and its main purpose is speech to text and translation for text messaging. Find the list in Setup->Manage Custom Words.

To enable your app to listen for specific phrases spoken by the user and then take some action, you need to specify which phrases to listen for using a KeywordRecognizer or GrammarRecognizer.

Specifying the phrases with an SRGS grammar can be useful if your app has more than just a few keywords, if you want to recognize more complex phrases, or if you want to easily turn sets of commands on and off. See: Create Grammars Using SRGS XML for file format information.

Once you have your SRGS grammar, and it is in your project in a StreamingAssets folder (/Assets/StreamingAssets/SRGS/myGrammar.xml), create a GrammarRecognizer and pass it the path to your SRGS file:

    private GrammarRecognizer grammarRecognizer;
    grammarRecognizer = new GrammarRecognizer(Application.streamingAssetsPath + "/SRGS/myGrammar.xml");

Now register for the OnPhraseRecognized event:

    grammarRecognizer.OnPhraseRecognized += grammarRecognizer_OnPhraseRecognized;

You'll get a callback containing information specified in your SRGS grammar, which you can handle appropriately.
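The GrammarRecognizer steps described here can be pulled together into one component. The following is only a sketch, not the author's code: the class name GrammarListener is hypothetical, it assumes myGrammar.xml really exists under StreamingAssets/SRGS, and it assumes a Windows or HoloLens build target, since UnityEngine.Windows.Speech is only available there.

```csharp
using UnityEngine;
using UnityEngine.Windows.Speech;

// Hypothetical component: loads an SRGS grammar and logs what was recognized.
public class GrammarListener : MonoBehaviour
{
    private GrammarRecognizer grammarRecognizer;

    private void Start()
    {
        // Assumption: the grammar file was placed in Assets/StreamingAssets/SRGS.
        string grammarPath = Application.streamingAssetsPath + "/SRGS/myGrammar.xml";

        grammarRecognizer = new GrammarRecognizer(grammarPath);
        grammarRecognizer.OnPhraseRecognized += Grammar_OnPhraseRecognized;
        grammarRecognizer.Start();
    }

    private void Grammar_OnPhraseRecognized(PhraseRecognizedEventArgs args)
    {
        // semanticMeanings holds the key/value tags defined in the SRGS file.
        SemanticMeaning[] meanings = args.semanticMeanings;
        if (meanings == null)
        {
            return;
        }

        foreach (SemanticMeaning meaning in meanings)
        {
            Debug.Log(meaning.key + ": " + string.Join(", ", meaning.values));
        }
    }

    private void OnDestroy()
    {
        if (grammarRecognizer == null)
        {
            return;
        }

        // Release the recognizer explicitly instead of waiting for garbage collection.
        grammarRecognizer.OnPhraseRecognized -= Grammar_OnPhraseRecognized;
        grammarRecognizer.Dispose();
    }
}
```

Attaching this script to any GameObject in the scene should be enough to try it out; what the handler logs depends entirely on the tags in your own grammar file.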
The relevant types are GrammarRecognizer, PhraseRecognizedEventArgs, SpeechError, and SpeechSystemStatus. The GrammarRecognizer is used if you're specifying your recognition grammar using SRGS. An example handler for its OnPhraseRecognized event is:

    private void Grammar_OnPhraseRecognized(PhraseRecognizedEventArgs args)
    {
        SemanticMeaning[] meanings = args.semanticMeanings;
    }

Finally, start recognizing!

    grammarRecognizer.Start();

For a KeywordRecognizer, the phrases live in a dictionary (here called keywords) that maps each phrase to a System.Action. We're adding the "activate" keyword in this example:

    // Create keywords for keyword recognizer
    keywords.Add("activate", () =>
    {
        // action to be performed when this keyword is spoken
    });

Create the keyword recognizer and tell it what we want to recognize (ToArray() needs using System.Linq):

    keywordRecognizer = new KeywordRecognizer(keywords.Keys.ToArray());

Now register for the OnPhraseRecognized event:

    keywordRecognizer.OnPhraseRecognized += KeywordRecognizer_OnPhraseRecognized;

An example handler is:

    private void KeywordRecognizer_OnPhraseRecognized(PhraseRecognizedEventArgs args)
    {
        System.Action keywordAction;
        // if the keyword recognized is in our dictionary, call that Action.
        if (keywords.TryGetValue(args.text, out keywordAction))
        {
            keywordAction.Invoke();
        }
    }

Finally, start recognizing!

    keywordRecognizer.Start();

Types: DictationRecognizer, SpeechError, SpeechSystemStatus

Use the DictationRecognizer to convert the user's speech to text. The DictationRecognizer exposes dictation functionality and supports registering and listening for hypothesis and phrase completed events, so you can give feedback to your user both while they speak and afterwards. Start() and Stop() methods respectively enable and disable dictation recognition. Once done with the recognizer, it should be disposed using Dispose() to release the resources it uses. It will release these resources automatically during garbage collection, at an extra performance cost, if they aren't released before that.

The Internet Client and Microphone capabilities must be declared for an app to use dictation. There are only a few steps needed to get started with dictation.
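As a rough illustration of getting started with dictation, the sketch below creates a DictationRecognizer, subscribes to its hypothesis, result, and error events, and starts it. The class name DictationListener and the handler names are hypothetical, and the script assumes a Windows or HoloLens build target with the Internet Client and Microphone capabilities already declared.

```csharp
using UnityEngine;
using UnityEngine.Windows.Speech;

// Hypothetical component: logs dictation text while and after the user speaks.
public class DictationListener : MonoBehaviour
{
    private DictationRecognizer dictationRecognizer;

    private void Start()
    {
        dictationRecognizer = new DictationRecognizer();

        // Hypotheses arrive while the user is still speaking.
        dictationRecognizer.DictationHypothesis += OnHypothesis;
        // A result arrives once a phrase is considered complete.
        dictationRecognizer.DictationResult += OnResult;
        dictationRecognizer.DictationError += OnError;

        dictationRecognizer.Start();
    }

    private void OnHypothesis(string text)
    {
        Debug.Log("Hearing: " + text);
    }

    private void OnResult(string text, ConfidenceLevel confidence)
    {
        Debug.Log("Heard (" + confidence + "): " + text);
    }

    private void OnError(string error, int hresult)
    {
        Debug.LogError("Dictation error: " + error + " (HRESULT " + hresult + ")");
    }

    private void OnDestroy()
    {
        if (dictationRecognizer == null)
        {
            return;
        }

        dictationRecognizer.DictationHypothesis -= OnHypothesis;
        dictationRecognizer.DictationResult -= OnResult;
        dictationRecognizer.DictationError -= OnError;
        dictationRecognizer.Dispose();
    }
}
```

Showing the hypothesis text in the UI as it arrives is one way to give users feedback while they are still speaking, with the final result replacing it afterwards.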

Start() and Stop() methods respectively enable and disable dictation recognition. Once done with the recognizer, unsubscribe from its events:

    dictationRecognizer.DictationResult -= DictationRecognizer_DictationResult;
    dictationRecognizer.DictationComplete -= DictationRecognizer_DictationComplete;
    dictationRecognizer.DictationHypothesis -= DictationRecognizer_DictationHypothesis;
    dictationRecognizer.DictationError -= DictationRecognizer_DictationError;

The recognizer must then be disposed using Dispose() to release the resources it uses. It will release these resources automatically during garbage collection, at an extra performance cost, if they aren't released before that.

Timeouts occur after a set period of time, and you can check for them in the DictationComplete event. If the recognizer starts and doesn't hear any audio for the first five seconds, it will time out. If the recognizer has given a result, but then hears silence for 20 seconds, it will time out.

Using both Phrase Recognition and Dictation

If you want to use both phrase recognition and dictation in your app, you'll need to fully shut one down before you can start the other. If you have multiple KeywordRecognizers running, you can shut them all down at once with:

    PhraseRecognitionSystem.Shutdown();

You can call Restart() to restore all recognizers to their previous state after the DictationRecognizer has stopped:

    PhraseRecognitionSystem.Restart();

You could also just start a KeywordRecognizer, which will restart the PhraseRecognitionSystem as well.
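One possible way to combine the timeout check with the hand-off between phrase recognition and dictation is sketched below. It is an illustration under assumptions rather than a definitive pattern: the class name SpeechModeSwitcher and the coroutine BeginDictation are hypothetical, and waiting on PhraseRecognitionSystem.Status before starting dictation is a cautious choice of this sketch, not something the text above requires.

```csharp
using System.Collections;
using UnityEngine;
using UnityEngine.Windows.Speech;

// Hypothetical component: pauses phrase recognition, runs one dictation
// session, then restores the phrase recognizers when the session ends.
public class SpeechModeSwitcher : MonoBehaviour
{
    private DictationRecognizer dictationRecognizer;

    // Run with StartCoroutine(BeginDictation()).
    public IEnumerator BeginDictation()
    {
        // Phrase recognition has to be fully shut down before dictation starts.
        if (PhraseRecognitionSystem.Status == SpeechSystemStatus.Running)
        {
            PhraseRecognitionSystem.Shutdown();
        }
        while (PhraseRecognitionSystem.Status == SpeechSystemStatus.Running)
        {
            yield return null; // wait a frame and check again
        }

        if (dictationRecognizer == null)
        {
            dictationRecognizer = new DictationRecognizer();
            dictationRecognizer.DictationComplete += OnDictationComplete;
        }
        dictationRecognizer.Start();
    }

    private void OnDictationComplete(DictationCompletionCause cause)
    {
        if (cause == DictationCompletionCause.TimeoutExceeded)
        {
            Debug.Log("Dictation timed out after a period of silence.");
        }
        else if (cause != DictationCompletionCause.Complete)
        {
            Debug.LogWarning("Dictation ended unexpectedly: " + cause);
        }

        // Restore the keyword and grammar recognizers to their previous state.
        PhraseRecognitionSystem.Restart();
    }

    private void OnDestroy()
    {
        if (dictationRecognizer == null)
        {
            return;
        }

        dictationRecognizer.DictationComplete -= OnDictationComplete;
        dictationRecognizer.Dispose();
    }
}
```

If the five-second and 20-second defaults don't suit your app, DictationRecognizer also exposes InitialSilenceTimeoutSeconds and AutoSilenceTimeoutSeconds properties that can be set before calling Start().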


Author: Kristen
